- Live visuals unity tutorial install

- Live visuals unity tutorial upgrade

- Live visuals unity tutorial full

- Live visuals unity tutorial software

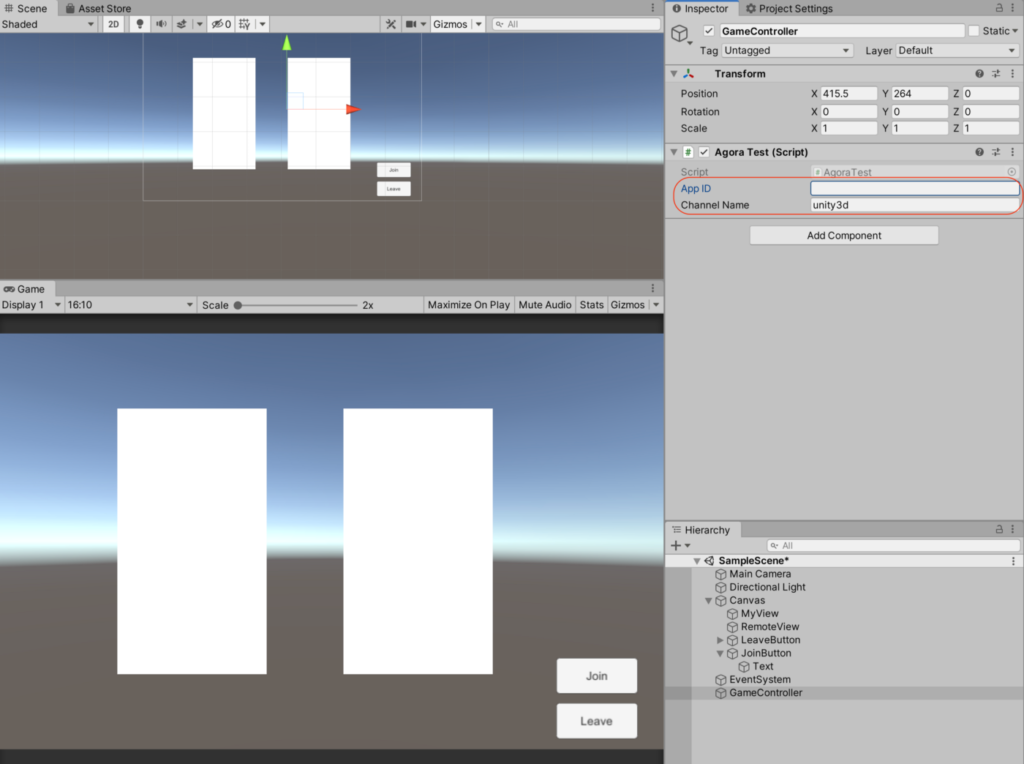

Once the package has finished downloading and importing, you'll find Perception as an option to Import into Project. The Tutorial Files included with the Perception package come with sample models and textures for products like tea and cereal that you can use to build a retail store item detection model.

Use the search bar in the Project pane to find the ForwardRenderer, then click Add Renderer Feature -> Ground Truth Renderer Feature to process the assets for use as synthetic data objects. We've now performed all the setup needed to use this project to generate synthetic 3D training data for our object detection model.

Creating a Simulation

Now let's create a scene to contain our assets. Click the + in the Project window and select Scene, then name it TutorialScene. Double click the newly created scene to focus it in your Hierarchy.

Next we need to set up our scene's Main Camera with some extra functionality so it can work with our Perception package. To do this, click on Main Camera in the Hierarchy to focus it in the Inspector. Click Add Component and find Perception Camera in the dropdown list. This extends your Main Camera to a Perception camera.

Next we need to configure this camera to label our objects' ground truth from its point of view.
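To make "ground truth from the camera's point of view" more concrete, here is a minimal sketch of how a 2D bounding box label can be derived from an object's 3D corners with a simple pinhole camera model. This is an illustration only, not how the Perception package is implemented; the function names, the camera-space convention (x right, y up, z forward), and the focal length in pixels are all assumptions made for this example.

```python
from typing import Iterable, Tuple

Point3 = Tuple[float, float, float]
Box2 = Tuple[float, float, float, float]


def project_point(point: Point3, focal_px: float, width: int, height: int) -> Tuple[float, float]:
    """Project a camera-space point onto the image plane (hypothetical pinhole model)."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point is behind the camera")
    u = width / 2 + focal_px * x / z
    v = height / 2 - focal_px * y / z  # image rows grow downward
    return u, v


def bounding_box_2d(corners: Iterable[Point3], focal_px: float, width: int, height: int) -> Box2:
    """Return (left, top, right, bottom) in pixels, clamped to the image bounds."""
    pixels = [project_point(c, focal_px, width, height) for c in corners]
    us = [u for u, _ in pixels]
    vs = [v for _, v in pixels]
    left = max(0.0, min(us))
    top = max(0.0, min(vs))
    right = min(float(width), max(us))
    bottom = min(float(height), max(vs))
    return left, top, right, bottom


def cube_corners(center: Point3, half_size: float) -> list:
    """Eight corners of an axis-aligned cube, used here as a stand-in for a product model."""
    cx, cy, cz = center
    offsets = (-half_size, half_size)
    return [(cx + dx, cy + dy, cz + dz) for dx in offsets for dy in offsets for dz in offsets]
```

Because the engine already knows where every object sits relative to the camera, a label like this can be computed exactly rather than drawn by hand, which is why synthetic annotations can be so precise.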

To install the package, go to Window > Package Manager, click the + button at the top left of the Package Manager, type the package name, and click Add.

This will download and import the package, which can take a few minutes.
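Packages added through the Package Manager are recorded in the project's `Packages/manifest.json` file under a `dependencies` map. As a rough sketch of what that bookkeeping looks like, the helper below adds an entry to a manifest's text. The function name is made up for this example, and because the text above does not spell out the package identifier or version, both are left as parameters and should be treated as placeholders.

```python
import json


def add_dependency(manifest_text: str, package_name: str, version: str) -> str:
    """Return manifest JSON text with package_name pinned to version.

    package_name and version are caller-supplied placeholders; this sketch
    does not know which identifier or version your project needs.
    """
    manifest = json.loads(manifest_text)
    dependencies = manifest.setdefault("dependencies", {})
    dependencies[package_name] = version
    return json.dumps(manifest, indent=2) + "\n"
```

In practice the Package Manager window does this for you; the sketch is only meant to show where the change ends up, which is handy when reviewing a project in version control.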

Live visuals unity tutorial install

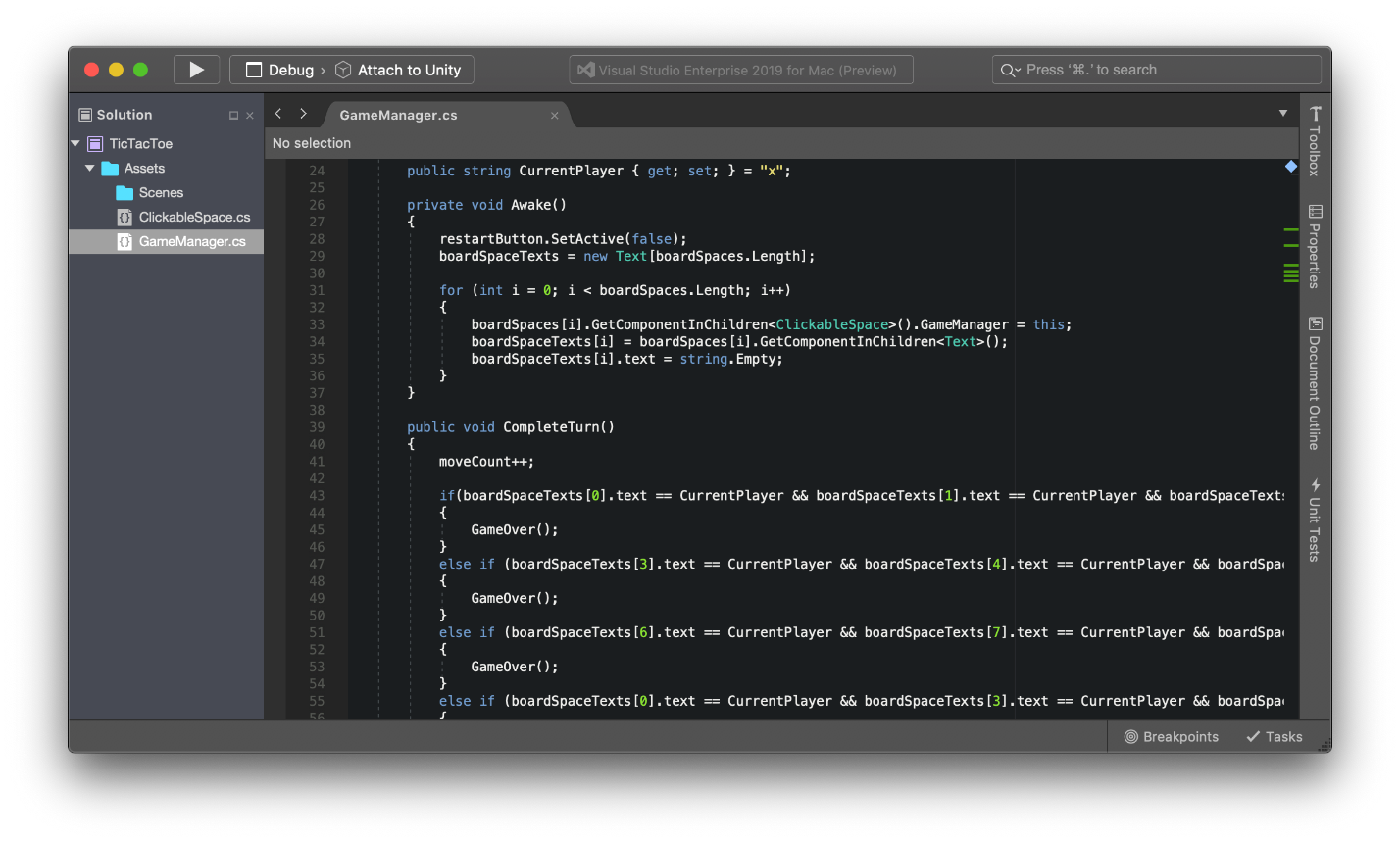

Sign in to Unity Hub and activate your Unity license (this is in Preferences > License Management > Activate New License).

Next, install Unity to your computer via Unity Hub. Once you have Unity Hub installed and activated, click "Installs" on the left and follow the process to install Unity 2019.4.18f1 (LTS). This is the current "Long Term Support" version of Unity and is known to work with Perception and the rest of this tutorial. In the installation options, be sure to add Visual Studio and Linux Build Support (Mono), which we will need later. After you've accepted the license, Unity will take a few minutes to download and install.

Once it's done, open your new Unity installation and create a new project starting from the Universal Render Pipeline template. You can call your project Roboflow Synthetic Data Tutorial and choose a location on your hard drive to store the project.

Next we need to install the Perception Package, which is what we will use for generating synthetic training data.

Live visuals unity tutorial upgrade

Since Perception is in active development we'll want flexibility to upgrade or downgrade our Unity version easily as the package matures.
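When switching between editor versions, it helps to check which version a project was last opened with. Unity records this in `ProjectSettings/ProjectVersion.txt` on a line starting with `m_EditorVersion:`. The sketch below reads that line and compares release streams; the function names are our own, and the parser assumes the common `major.minor.patch` plus stage letter plus build number form (for example `2019.4.18f1`), so other variants would need extra handling.

```python
import re
from typing import Tuple

# Assumed version shape: 2019.4.18f1 -> year.minor.patch, stage letter, build number.
_VERSION_RE = re.compile(r"^(\d+)\.(\d+)\.(\d+)([abfp])(\d+)$")


def parse_unity_version(text: str) -> Tuple[int, int, int, str, int]:
    """Split a Unity version string into comparable parts."""
    match = _VERSION_RE.match(text.strip())
    if match is None:
        raise ValueError(f"unrecognised Unity version: {text!r}")
    major, minor, patch, stage, build = match.groups()
    return int(major), int(minor), int(patch), stage, int(build)


def read_editor_version(project_version_txt: str) -> str:
    """Extract the editor version from the contents of ProjectVersion.txt."""
    for line in project_version_txt.splitlines():
        if line.startswith("m_EditorVersion:"):
            return line.split(":", 1)[1].strip()
    raise ValueError("m_EditorVersion line not found")


def same_release_stream(a: str, b: str) -> bool:
    """True when both versions share major and minor numbers (e.g. both 2019.4)."""
    return parse_unity_version(a)[:2] == parse_unity_version(b)[:2]
```

A check like this can flag when a project was saved with a different release stream than the one this tutorial was written against, before you open it in the wrong editor.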

Live visuals unity tutorial full

Synthetically generated data can be a great way to bootstrap a project and get a prototype running. You can then add in real-world data to improve your model over time. The more closely your generated images match the scenes your model will see in the wild, the better.

You'll need a Unity account and license to get started; this is free for hobbyists or those working at companies that had less than $100k revenue last year. You can check the full criteria here, then create an account here. After signing up, we need to install Unity Hub, a management tool that will allow us to switch between versions of Unity.

Live visuals unity tutorial software

Collecting images and annotating them with high-quality labels can be an expensive and time-consuming process. The promise of generating synthetic data to reduce the burden is alluring. In the past we have covered techniques such as augmentation, context synthesis, and model-assisted labeling to improve the process.

In this post, we'll show another technique: using Unity's 3D engine (and their Perception package) to generate perfectly annotated synthetic images using only software, and then using that generated data to train a computer vision model.